Quick description

A common mistake people make when trying to answer a mathematical question is to work from first principles: it is almost always easier to modify something you already know. This article illustrates the point with examples that range from simple arithmetic to the forefront of research.

|

Contents

Example 1: multiplying two-digit numbers together

Example 2: convergence of sequences

Example 3: convergence of series

Example 4: the matrix of a rotation

Example 5: the set of invertible  matrices is open

matrices is open

Example 1: multiplying two-digit numbers together

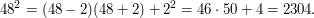

What is  ? The obvious way of answering this question is to use your favourite multiplication technique to work it out. For example, you might say that it is

? The obvious way of answering this question is to use your favourite multiplication technique to work it out. For example, you might say that it is  . However, if you are keen on mathematics, then this probably won't be the best way of going about it, since you are likely to know many "nearby" facts and it is a pity to waste that knowledge. For example, if you know the squares of all numbers up to 20, or at least if you know that

. However, if you are keen on mathematics, then this probably won't be the best way of going about it, since you are likely to know many "nearby" facts and it is a pity to waste that knowledge. For example, if you know the squares of all numbers up to 20, or at least if you know that  (which perhaps you know because you like powers of 2), then you can reason as follows: if sixteen sixteens are 256, then I get to seventeen sixteens by adding another 16, which takes me to 272.

(which perhaps you know because you like powers of 2), then you can reason as follows: if sixteen sixteens are 256, then I get to seventeen sixteens by adding another 16, which takes me to 272.

This very simple example illustrates an important principle in mathematics: even if getting from A to C is hard, you may know how to get to a point B from which getting to C is easy. This phenomenon shows up strongly in mental arithmetic: if you are asked to multiply two two-digit numbers together in your head, and have a reasonable "web of number facts" at your disposal, then it is almost always better to use tricks like the one in the previous paragaph. (Another approach would have been to multiply 17 by 2 four times, getting the sequence 34, 68, 136, 272.)

More mental-arithmetic examples

What other "number facts" might one know? One that many people are aware of is the amusing fact that  . This makes multiplying by

. This makes multiplying by  pretty easy. For example, if you need to work out

pretty easy. For example, if you need to work out  you can use the fact that

you can use the fact that  , so

, so  . Or if you wanted to work out

. Or if you wanted to work out  you could first work out

you could first work out  , getting

, getting  . Then you could add

. Then you could add  to it and deduce that

to it and deduce that  . And finally you could add a further

. And finally you could add a further  to get

to get  , which is the answer to the question asked.

, which is the answer to the question asked.

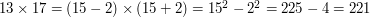

Another useful principle is that  . This means that if you know your squares, then you can multiply together any two numbers that are reasonably close together. For example,

. This means that if you know your squares, then you can multiply together any two numbers that are reasonably close together. For example,  . What about

. What about  ? We could first work out

? We could first work out  , getting

, getting  (by the difference-of-squares method) and then add another

(by the difference-of-squares method) and then add another  to get

to get  (because it's

(because it's

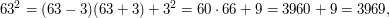

A less-known trick is that this principle also works "backwards", allowing us to easily compute any square using the equation  . We just need to pick a suitable

. We just need to pick a suitable  for which one of

for which one of  and

and  becomes simple. For example, we have

becomes simple. For example, we have

and also

In all these cases, the basic method is to get what one might think of as a "first approximation" to the calculation and then adjust it.

Example 2: convergence of sequences

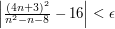

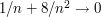

Suppose that you are asked to prove completely rigorously that  tends to

tends to  as

as  tends to infinity. Some people think that the words "completely rigorously" are an instruction to go back to first principles and produce a proof that starts like this. "Let

tends to infinity. Some people think that the words "completely rigorously" are an instruction to go back to first principles and produce a proof that starts like this. "Let  . We shall show that there exists an

. We shall show that there exists an  such that

such that  whenever

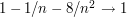

whenever  ." But that is not how to go about it. A much better way is to begin by dividing top and bottom by

." But that is not how to go about it. A much better way is to begin by dividing top and bottom by  . That gives us

. That gives us  . Once we have written the expression in this way, we can deduce that it converges to

. Once we have written the expression in this way, we can deduce that it converges to  just from the fact that

just from the fact that  tends to zero and from standard theorems about limits. Those theorems are the ones that say that if

tends to zero and from standard theorems about limits. Those theorems are the ones that say that if  and

and  , then

, then  ,

,  ,

,  and (if

and (if  )

)  . So from the fact that

. So from the fact that  we get that

we get that  , so

, so  , so

, so  , so

, so  . Similarly,

. Similarly,  , so

, so  , so

, so  . And finally, that implies that

. And finally, that implies that  .

.

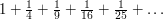

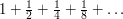

Example 3: convergence of series

How does one show that the series  converges? That is, how does one show that as you keep on adding reciprocals of squares, the resulting sum doesn't go off to infinity? Convergence of infinite series is usually one of the first topics covered in a first course in real analysis, and one of the messages one needs to learn is that the best way of proving that a given series converges is usually not to start from scratch, but rather to deduce the convergence from the fact that some other series is known to converge.

converges? That is, how does one show that as you keep on adding reciprocals of squares, the resulting sum doesn't go off to infinity? Convergence of infinite series is usually one of the first topics covered in a first course in real analysis, and one of the messages one needs to learn is that the best way of proving that a given series converges is usually not to start from scratch, but rather to deduce the convergence from the fact that some other series is known to converge.

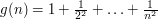

In this case, the difficulty is that there doesn't seem to be a nice expression for the function  . But perhaps we should think about that. What do we know about

. But perhaps we should think about that. What do we know about  ? Well, from its definition we know that

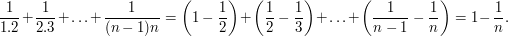

? Well, from its definition we know that  Applying the advice that one should hunt for analogies, we might recall that taking the difference

Applying the advice that one should hunt for analogies, we might recall that taking the difference  is a bit like taking the derivative of

is a bit like taking the derivative of  . And if we did take the derivative of

. And if we did take the derivative of  and obtained

and obtained  , then

, then  would have had the form

would have had the form  .

.

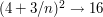

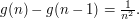

Now let us turn that on its head and see what  is if

is if  for every

for every  . The answer is

. The answer is  . And turning that on its head, we see that the series

. And turning that on its head, we see that the series  can be analysed easily, because

can be analysed easily, because

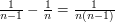

But  , so from this it follows that

, so from this it follows that  . Since that is true for every

. Since that is true for every  , the series

, the series  converges and its limit is less than 2.

converges and its limit is less than 2.

The point here is that we deduced the convergence of the series in an easy way from the convergence of a series that can be shown easily to converge.

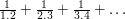

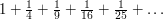

You might object that you prefer a different proof that the series converges. For example, another well-known proof is to group the terms of the series as follows:

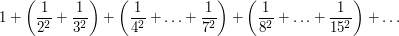

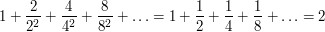

This is clearly less than what you get if you replace each fraction in a group by the largest fraction in that group. And what you get is

So the original series converges and its limit is less than 2.

However, if you prefer this proof, that doesn't imply that you like starting from scratch. This proof also uses what you know (that the series  converges) to help you show something you don't know (that the series

converges) to help you show something you don't know (that the series  converges).

converges).

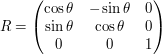

Example 4: the matrix of a rotation

Here is a well-known matrix calculation: to find the matrix of a rotation through  about a unit vector

about a unit vector  in

in  . The direct starting-from-scratch approach would be to work out what happens to the vectors

. The direct starting-from-scratch approach would be to work out what happens to the vectors  ,

,  and

and  under this rotation. However, that is quite a difficult calculation. A much better approach is to exploit the fact that we can write down the answer easily if we are lucky enough that

under this rotation. However, that is quite a difficult calculation. A much better approach is to exploit the fact that we can write down the answer easily if we are lucky enough that  is the vector

is the vector  . Then the rotation is just a rotation of the

. Then the rotation is just a rotation of the  -plane, so it has matrix

-plane, so it has matrix  . Now all we have to do is come up with the matrix

. Now all we have to do is come up with the matrix  of a rotation that takes

of a rotation that takes  to

to  and calculate

and calculate  . Why? Because the corresponding linear map moves

. Why? Because the corresponding linear map moves  to

to  , then rotates everything through

, then rotates everything through  about

about  , then moves

, then moves  back to

back to  again: the total effect is a rotation through

again: the total effect is a rotation through  about

about  .

.

How do we find a rotation that takes  to

to  ? Well, the matrix of such a rotation has

? Well, the matrix of such a rotation has  as its third column. So all we need to do is find two other unit column vectors

as its third column. So all we need to do is find two other unit column vectors  and

and  that are orthogonal to each other and to

that are orthogonal to each other and to  , and the matrix formed by

, and the matrix formed by  ,

,  and

and  will be the matrix of a rotation that takes

will be the matrix of a rotation that takes  to

to  (unless it has determinant

(unless it has determinant  , in which case swap the first two columns). There is still a bit of calculation involved here, but it is pretty straightforward.

, in which case swap the first two columns). There is still a bit of calculation involved here, but it is pretty straightforward.

Example 5: the set of invertible  matrices is open

matrices is open

The set of invertible  matrices can be thought of as a subset of

matrices can be thought of as a subset of  , and this subset turns out to be open. In other words, if you take any invertible matrix and change its entries by a small enough amount, then the perturbed matrix will also be invertible.

, and this subset turns out to be open. In other words, if you take any invertible matrix and change its entries by a small enough amount, then the perturbed matrix will also be invertible.

How should one prove this? The obvious approach is to dive straight in. If  is our invertible matrix then we let

is our invertible matrix then we let  be a small positive constant (to be chosen later) and try to prove that any matrix

be a small positive constant (to be chosen later) and try to prove that any matrix  such that

such that  for every

for every  and

and  is also invertible. But how do we find the inverse of

is also invertible. But how do we find the inverse of  ?

?

What we have just done is work directly with the definitions of "open set" and "invertible". However, if we are prepared to use a little bit of theory, the problem becomes dramatically easier. First of all, what are some fundamental facts about open sets? One is that they are complements of closed sets. Another is that the inverse image of an open set under a continuous function is open. If we use the first of these, we transform our problem into that of showing that the set of non-invertible matrices is closed. We could then use the sequence definition of closed sets and try to prove that a limit of non-invertible matrices is non-invertible. But there doesn't seem to be an obvious way to do that – or at least not yet. If we think about the second property of open sets, then we see that one way of proving that our set is open is to find a continuous function  from the set of all

from the set of all  matrices to some other space

matrices to some other space  such that there is an open subset

such that there is an open subset  of

of  with

with  equal to the set of all invertible matrices.

equal to the set of all invertible matrices.

It's not instantly obvious what such a function might be, so let us turn to the word "invertible". What important facts do we know about invertible matrices? One of them is that a matrix is invertible if and only if its rank is  . Another is that it is invertible if and only if it has non-zero determinant.

. Another is that it is invertible if and only if it has non-zero determinant.

At this point a lightbulb should go on. We were looking for a continuous function defined on matrices, and the determinant is a function defined on matrices. Is it continuous? Yes, since it is a polynomial function of the entries of the matrix. Can we express the set of invertible matrices as the inverse image of an open set? Yes, since they are precisely the matrices with determinant belonging to the set of non-zero real (or complex if we are talking about complex matrices) numbers, and that set is open.

So the eventual proof is extremely easy: the invertible matrices are the inverse image of the open set  under the continuous function "determinant of". How did we find this proof? By not starting from scratch: instead, we spent a short time thinking about well-known facts connected with the concepts that we were trying to relate.

under the continuous function "determinant of". How did we find this proof? By not starting from scratch: instead, we spent a short time thinking about well-known facts connected with the concepts that we were trying to relate.

I hinted earlier that one could use the fact that open sets are complements of closed sets, and indeed that gives an alternative, fairly similar approach. We would like to prove that a limit of non-invertible matrices is non-invertible. Again, once we think of using determinants this becomes easy. It is enough to prove that a limit of matrices with zero determinant has zero determinant. This will certainly be the case if the determinant is continuous – which it is.

See also

There are some areas of mathematics where this advice applies in a very extreme way: machinery is developed in order to save people the effort of writing out essentially the same argument again and again. A good example is algebraic topology, and indeed the article If your topological invariant takes integer values, they may well be homology classes in disguise could be summarized as giving the advice not to prove topological theorems from scratch, but to use the well-developed machinery of homology and cohomology instead.

Tricki

Tricki

Comments

Post new comment

(Note: commenting is not possible on this snapshot.)