Quick description

Cantor's continuum hypothesis is perhaps the most famous example of a mathematical statement that turned out to be independent of the Zermelo-Fraenkel axioms. What is less well known is that the continuum hypothesis is a useful tool for solving certain sorts of problems in analysis. It is typically used in conjunction with transfinite induction. This article explains why it comes up.

Example 1

Given two infinite sets  and

and  of positive integers, let us say that

of positive integers, let us say that  is sparser than

is sparser than  if the ratio of the size of

if the ratio of the size of  to the size of

to the size of  tends to zero as

tends to zero as  tends to infinity. The relation "is sparser than" is a partial order on the set of all infinite subsets of

tends to infinity. The relation "is sparser than" is a partial order on the set of all infinite subsets of  . Is there a totally ordered collection of sets (under this ordering) such that every infinite subset of

. Is there a totally ordered collection of sets (under this ordering) such that every infinite subset of  contains one of them as a subset?

contains one of them as a subset?

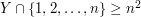
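
To make the definition concrete, here is a small Python sketch, not part of the original argument, that compares the counting functions of two sets up to a finite cutoff. The helper name `counting_ratio` and the choice of the squares and the even numbers are illustrative assumptions. A finite computation can only suggest, not prove, that the ratio tends to zero.

```python
from bisect import bisect_right

def counting_ratio(a, b, n):
    """Return |A ∩ {1..n}| / |B ∩ {1..n}| for sorted lists a and b."""
    count_a = bisect_right(a, n)  # number of elements of a that are <= n
    count_b = bisect_right(b, n)  # number of elements of b that are <= n
    return count_a / count_b

LIMIT = 10**6
squares = [k * k for k in range(1, 1001)]  # every square up to LIMIT
evens = list(range(2, LIMIT + 1, 2))       # every even number up to LIMIT

# Ratios at n = 10^2, 10^3, ..., 10^6; they shrink as n grows.
ratios = [counting_ratio(squares, evens, 10**e) for e in range(2, 7)]
print(ratios)
```

In this finite range the ratios decrease steadily, which is consistent with the squares being sparser than the even numbers.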

Let us see how to solve this question rather easily with the help of transfinite induction and the continuum hypothesis. These two techniques take us as far as the first line of the proof, after which a small amount of work is necessary. But here is the first line that gets us started. By the well-ordering principle we can find a one-to-one correspondence between the set of all infinite subsets of $\mathbb{N}$ and the first ordinal of cardinality $2^{\aleph_0}$. By the continuum hypothesis, this is also the first uncountable ordinal. Therefore, we can begin as follows.

Let us well-order the infinite subsets of $\mathbb{N}$ in such a way that each one has countably many predecessors.

Once we have written this, we are well on the way to a just-do-it proof. Let us build a collection of sets $\mathcal{B}$ that is totally ordered (and in fact well-ordered) by the sparseness relation, and let us take care of each infinite subset of $\mathbb{N}$ in turn. For each countable ordinal $\alpha$ let us write $A_\alpha$ for the infinite subset of $\mathbb{N}$ that we have associated with $\alpha$. Now let $\alpha$ be a countable ordinal and suppose that for every $\beta<\alpha$ we have chosen an infinite subset $B_\beta \subset A_\beta$, and suppose that we have done this in such a way that whenever $\beta<\gamma<\alpha$ the set $B_\gamma$ is sparser than the set $B_\beta$. We would now like to find an infinite subset $B_\alpha$ of $A_\alpha$ that is sparser than every single $B_\beta$ with $\beta<\alpha$.

Because there are only countably many sets $B_\beta$ with $\beta<\alpha$, it is straightforward to do this by means of a diagonal argument. Here is one way of carrying it out. For each $\beta<\alpha$, let $f_\beta$ be the function from $\mathbb{N}$ to $\mathbb{N}$ that takes $n$ to the $n^2$th element of $B_\beta$. Now enumerate these functions as $g_1, g_2, g_3, \dots$ (with repetitions if there are only finitely many), define a diagonal function $g$ by taking $g(n)$ to be the smallest element of $A_\alpha$ that is larger than both $g(n-1)$ and $\max\{g_1(n),\dots,g_n(n)\}$ (with the convention $g(0)=0$). Then let $B_\alpha=\{g(1),g(2),g(3),\dots\}$. Then if $n\geq m$, we know that $g(n)>f_\beta(n)$ for each $\beta$ that corresponds to one of the functions $g_1,\dots,g_m$. In other words, once $n\geq m$, among the integers up to $g(n)$ there are only $n$ elements of $B_\alpha$ but at least $n^2$ elements of $B_\beta$, so the ratio of the two counts is at most $1/n$. This proves that $B_\alpha$ is sparser than every $B_\beta$ with $\beta<\alpha$. Therefore, we can construct a collection of infinite sets $B_\alpha$, one for each countable ordinal $\alpha$, such that $B_\alpha$ is sparser than $B_\beta$ whenever $\beta<\alpha$, and $B_\alpha\subset A_\alpha$ for every $\alpha$. Since every infinite subset of $\mathbb{N}$ is $A_\alpha$ for some $\alpha$, this completes the proof.
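
The diagonal step can be imitated on a computer for a finite list of earlier sets. The following Python sketch is only an illustration under stated assumptions: sets are represented by membership predicates, the growth functions take $n$ to the $n^2$th element of each earlier set (one choice that makes the argument work), and the names `nth_element` and `diagonal_subset` are hypothetical. It produces only finitely many elements of the new set.

```python
from itertools import count
from math import isqrt

def nth_element(members, n):
    """Return the n-th smallest (1-indexed) positive integer k with members(k) true."""
    seen = 0
    for k in count(1):
        if members(k):
            seen += 1
            if seen == n:
                return k

def diagonal_subset(in_a, earlier_sets, length):
    """Return g(1), ..., g(length) for the diagonal function g.

    g(n) is the least element of A larger than g(n-1) and larger than the
    n^2-th element of each of the first n earlier sets.
    """
    chosen, previous = [], 0  # previous plays the role of g(n-1), with g(0) = 0
    for n in range(1, length + 1):
        bound = previous
        for members in earlier_sets[:n]:
            bound = max(bound, nth_element(members, n * n))
        k = bound + 1
        while not in_a(k):  # search upward for the next element of A
            k += 1
        chosen.append(k)
        previous = k
    return chosen

def is_even(k):
    return k % 2 == 0

def is_square(k):
    return isqrt(k) ** 2 == k

def is_multiple_of_3(k):
    return k % 3 == 0

# A = multiples of 3; earlier sets = even numbers and perfect squares.
b_alpha = diagonal_subset(is_multiple_of_3, [is_even, is_square], length=6)
print(b_alpha)
```

Here the new set is drawn from the multiples of 3 and is forced to grow faster than both the even numbers and the squares, in the same way that $g$ outgrows each enumerated function from some point on.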

General discussion

What are the features of this problem that made the continuum hypothesis an appropriate tool for solving it? To answer this, let us imagine what would have happened if we had not used the continuum hypothesis. Once we had decided to solve the problem in a just-do-it manner, we would have well-ordered the subsets of $\mathbb{N}$, and tried, as above, to find a subset $B_\alpha\subset A_\alpha$ for each $\alpha$, with these subsets getting sparser and sparser all the time. But if the number of predecessors of some $\alpha$ had been uncountable, then we would not have been able to apply a diagonal argument.

So the feature of the problem that caused us to want to use the continuum hypothesis was that we were engaging in a transfinite construction, and in order to be able to continue we used some result that depended on countable sets being small. In this case, it was the result that for any countable collection of sets there is a set that is sparser than all of them, but it could have been another assertion about countability, such as that a countable union of sets of measure zero has measure zero.

There is sometimes an alternative to using the continuum hypothesis, which is to use Martin's axiom. Very roughly speaking, Martin's axiom tells you that the kinds of statements one likes to use about countable sets, such as that a countable union of sets of measure zero has measure zero, continue to be true if you replace "countable" by any cardinality that is strictly less than $2^{\aleph_0}$. For example, it would give you a strengthening of the Baire category theorem that said that an intersection of fewer than $2^{\aleph_0}$ dense open sets is non-empty. If the continuum hypothesis is true, then this is of course not a strengthening, but it is consistent that Martin's axiom is true and the continuum hypothesis false.

Example 2

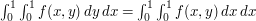

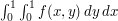

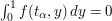

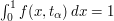

A special case of Fubini's theorem is the statement that if  is a bounded measurable function defined on the unit square

is a bounded measurable function defined on the unit square ![[0,1]^2](../images/tex/c7705367e888bf213397b0856931f107.png) , then

, then  . That is, to integrate

. That is, to integrate  , one can first integrate over

, one can first integrate over  and then over

and then over  , or first over

, or first over  and then over

and then over  , and the result will be the same in both cases.

, and the result will be the same in both cases.
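
As a sanity check of what the theorem asserts for a well-behaved function, the following Python sketch approximates both iterated integrals by midpoint Riemann sums. The test function $f(x,y)=xy^2$, the step count, and the helper name `iterated_sum` are assumptions chosen for illustration. A numerical check of this kind says nothing about non-measurable functions.

```python
def iterated_sum(f, steps, x_first):
    """Approximate an iterated integral of f over [0,1]^2 with midpoint sums.

    If x_first is true, the inner sum runs over x and the outer sum over y;
    otherwise the roles are swapped.
    """
    h = 1.0 / steps
    points = [(i + 0.5) * h for i in range(steps)]
    total = 0.0
    for outer in points:
        inner = 0.0
        for inner_point in points:
            x, y = (inner_point, outer) if x_first else (outer, inner_point)
            inner += f(x, y) * h
        total += inner * h
    return total

def f(x, y):
    return x * y * y  # a bounded continuous (hence measurable) function

dx_then_dy = iterated_sum(f, 200, x_first=True)
dy_then_dx = iterated_sum(f, 200, x_first=False)
print(dx_then_dy, dy_then_dx)  # both should be close to the exact value 1/6
```

Both orders of summation agree up to rounding, as one expects for a continuous function.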

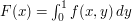

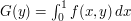

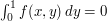

One might ask whether it is necessary for  to be measurable. Perhaps it is enough if

to be measurable. Perhaps it is enough if  is a measurable function of

is a measurable function of  for fixed

for fixed  and vice versa, and that the functions

and vice versa, and that the functions  and

and  are measurable as well. That would be enough to ensure that both the double integrals

are measurable as well. That would be enough to ensure that both the double integrals  and

and  exist.

exist.

If you try to prove that these two double integrals are equal under the weaker hypothesis, you will find that you do not manage. So it then makes sense to look for a counterexample.

An initial difficulty one faces is that the properties that this counterexample is expected to have are somewhat vague: we want a function  of two variables such that certain functions derived from it are measurable. But this measurability is a rather weak property, so it doesn't give us much of a steer towards any particular construction. This is just the kind of situation where it is a good idea to try to prove a stronger result. For example, instead of asking for

of two variables such that certain functions derived from it are measurable. But this measurability is a rather weak property, so it doesn't give us much of a steer towards any particular construction. This is just the kind of situation where it is a good idea to try to prove a stronger result. For example, instead of asking for  to be bounded, we could ask for it to take just the values

to be bounded, we could ask for it to take just the values  and

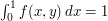

and  . And instead of asking for the two double integrals to be different, we could ask for something much more extreme: that

. And instead of asking for the two double integrals to be different, we could ask for something much more extreme: that  for every

for every  and

and  for every

for every  . (Note that if we can do this, then we will easily be able to calculate the two double integrals. Note also that we won't have to think too hard about making

. (Note that if we can do this, then we will easily be able to calculate the two double integrals. Note also that we won't have to think too hard about making  non-measurable: that will just drop out from the fact that Fubini's theorem doesn't apply.)

non-measurable: that will just drop out from the fact that Fubini's theorem doesn't apply.)

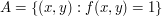

If  takes just the values

takes just the values  and

and  , then we could let

, then we could let  . Then the condition we are aiming for is that for every

. Then the condition we are aiming for is that for every  the set

the set  has measure 0 and for every

has measure 0 and for every  the set

the set  has measure 1.

has measure 1.

It is at this point that we might think of using transfinite induction. Let us well-order the interval $[0,1]$ and try to ensure the above conditions one number at a time.

Suppose then that each real number in $[0,1]$ has been indexed by some ordinal $\alpha$ and write $x_\alpha$ for the real number indexed by $\alpha$. Suppose that for each $\beta<\alpha$ we have decided what the cross-sections $A_{x_\beta}=\{x:(x,x_\beta)\in A\}$ and $A^{x_\beta}=\{y:(x_\beta,y)\in A\}$ are going to be. And suppose that we have done this in such a way that $A_{x_\beta}$ always has measure 0 and $A^{x_\beta}$ always has measure 1. Now we would like to choose the cross-sections $A_{x_\alpha}$ and $A^{x_\alpha}$ with the same property.

We do not have complete freedom to do this, since we have already decided for each $\beta<\alpha$ whether $x_\beta$ belongs to $A_{x_\alpha}$ and whether $x_\beta$ belongs to $A^{x_\alpha}$ (these questions were settled when we chose $A^{x_\beta}$ and $A_{x_\beta}$). And this could be a problem: what if the set $\{x_\beta:\beta<\alpha\}$ is not a set of measure zero?

But we can solve this problem by ensuring that initial segments of the well-ordering of $[0,1]$ do have measure zero. How do we do this? We use the continuum hypothesis. Once again, we make the first line of our proof the following:

Well-order the closed interval $[0,1]$ in such a way that each element has countably many predecessors.

If we do this, then we find that for each $\alpha$ we have made only countably many decisions about which points $(x,x_\alpha)$ and $(x_\alpha,y)$ belong or do not belong to $A$. Therefore, we can decide about the rest of the points as follows: for every $x$ such that the value of $f(x,x_\alpha)$ is not yet assigned, set it to be $0$, and for every $y$ such that the value of $f(x_\alpha,y)$ is not yet assigned, set it to be $1$. (The single diagonal point $(x_\alpha,x_\alpha)$ can be given either value.) This guarantees that $f(x,x_\alpha)=0$ for all but countably many $x$, and $f(x_\alpha,y)=1$ for all but countably many $y$. Since countable sets have measure zero, for every $\alpha$ we have $\int_0^1 f(x,x_\alpha)\,dx=0$ and $\int_0^1 f(x_\alpha,y)\,dy=1$.

General discussion

If we look at the function $f$ that we have just defined, then we see that it has the following simple description. If $x\prec y$ in the well-ordering we placed on $[0,1]$ then $f(x,y)=1$ and if $y\prec x$ in this well-ordering then $f(x,y)=0$. We could have omitted most of the above discussion and simply defined $f$ in this way in the first place, pointing out that for each $y$, $f(x,y)=0$ for all but at most countably many $x$, and for each $x$, $f(x,y)=1$ for all but at most countably many $y$. The point of the discussion was to show how this clever example can arise as a result of a natural thought process.

However, it is also worth remembering the example, since it gives us a second quite common way of using the continuum hypothesis. To clarify this, let us summarize the two ways we have discussed.

Method 1. In a proof by transfinite induction on a set $X$ of cardinality $2^{\aleph_0}$, start with the line, "Let $\prec$ be a well-ordering of $X$ such that every element has countably many predecessors," or equivalently, "Take a bijection between $X$ and the first uncountable ordinal $\omega_1$, and let $x_\alpha$ denote the element of $X$ that corresponds to the (countable) ordinal $\alpha$."

Method 2. Let $\prec$ be a well-ordering of the reals, or some subset of the reals such as $[0,1]$, such that each element has countably many predecessors, and use this well-ordering as the basis for some other construction.

It is also worth noting that the function $f$ defined in Example 2 is very similar in spirit to the double sequence $(a_{mn})$, where $a_{mn}=1$ if $m<n$ and $a_{mn}=0$ otherwise. This sequence has the property that $\lim_{m\to\infty}a_{mn}=0$ for every $n$ and $\lim_{n\to\infty}a_{mn}=1$ for every $m$. It is discussed as Example 5 in the article on just-do-it proofs.
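
The countable analogue is easy to experiment with. The Python sketch below assumes the double sequence is $a_{mn}=1$ when $m<n$ and $0$ otherwise, and uses a hypothetical helper `eventual_value` that samples a window of large indices as a finite stand-in for taking a limit. It illustrates, rather than proves, that the two iterated limits differ.

```python
def a(m, n):
    """Double sequence: 1 if m < n, otherwise 0."""
    return 1 if m < n else 0

def eventual_value(sequence, start=1000, window=50):
    """Return the common value of sequence(k) on a window of large k, or None."""
    values = {sequence(k) for k in range(start, start + window)}
    return values.pop() if len(values) == 1 else None

# Fix n and let m grow: the terms are eventually 0.
limits_in_m = [eventual_value(lambda m, n=n: a(m, n)) for n in range(1, 11)]
# Fix m and let n grow: the terms are eventually 1.
limits_in_n = [eventual_value(lambda n, m=m: a(m, n)) for m in range(1, 11)]

print(limits_in_m)
print(limits_in_n)
```

The order in which the two indices are sent to infinity changes the answer, just as the order of integration does for $f$.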

Tricki
