Quick description

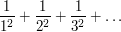

If you are trying to calculate the sum  , then sometimes it is possible to spot another sequence

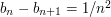

, then sometimes it is possible to spot another sequence  such that

such that  and

and  . If that is the case, then your sum is equal to

. If that is the case, then your sum is equal to  .

.
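The reason the sum comes out as the first term of the auxiliary sequence is a one-line telescoping argument. As a sketch, assuming the notation $a_n$ for the terms and $(b_n)$ for a sequence with $b_n - b_{n+1} = a_n$ and $b_n \to 0$ (the indexing from $n = 1$ is an assumption):

$$\sum_{n=1}^{N} a_n = \sum_{n=1}^{N} (b_n - b_{n+1}) = b_1 - b_{N+1} \longrightarrow b_1 \quad \text{as } N \to \infty.$$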

Prerequisites

The definition of an infinite sum.

Example 1

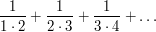

An infinite sum that is well known to be straightforward to calculate exactly is the sum

$$\sum_{n=1}^\infty \frac{1}{n(n+1)}.$$

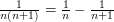

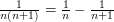

The usual technique for summing this is to observe that $\frac{1}{n(n+1)} = \frac{1}{n} - \frac{1}{n+1}$, from which it follows that

$$\sum_{n=1}^N \frac{1}{n(n+1)} = 1 - \frac{1}{N+1},$$

which tends to 1 as $N$ tends to infinity.
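The telescoping identity can be checked with exact rational arithmetic. The following is a minimal sketch, assuming the series in question is $\sum 1/(n(n+1))$ and taking $b_n = 1/n$; the function names `partial_sum` and `closed_form` are hypothetical and chosen only for illustration.

```python
from fractions import Fraction


def partial_sum(N):
    """Exact value of sum_{n=1}^{N} 1/(n(n+1)), added term by term."""
    return sum(Fraction(1, n * (n + 1)) for n in range(1, N + 1))


def closed_form(N):
    """Telescoped value b_1 - b_{N+1} with b_n = 1/n, i.e. 1 - 1/(N+1)."""
    return 1 - Fraction(1, N + 1)


if __name__ == "__main__":
    for N in (1, 2, 10, 100):
        # The two computations agree exactly for every N tried here.
        assert partial_sum(N) == closed_form(N)
        # The gap to the limit 1 is exactly 1/(N+1), which shrinks as N grows.
        print(N, partial_sum(N), 1 - partial_sum(N))
```

Using `Fraction` rather than floats keeps the comparison exact, so agreement between the two functions is not an artefact of rounding.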

This points to a circumstance in which an infinite sum can be evaluated exactly: we can work out the discrete analogue of an "antiderivative". That is, we have a sequence $(a_n)$ and can spot a nice sequence $(b_n)$ such that $b_n - b_{n+1} = a_n$. Of course, $-b_n$ must in that case be the partial sum $\sum_{k=1}^{n-1} a_k$ (up to an additive constant). So is this really making the more or less tautologous observation that if you have a nice formula for the partial sums and can see easily what they converge to then you are done?

It isn't quite, because we could have spotted that $\frac{1}{n(n+1)} = \frac{1}{n} - \frac{1}{n+1}$ without working out any partial sums.

General discussion

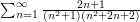

Let us try to understand better why the above trick is not a universal method for calculating all infinite sums, by contrasting the above example with the example of the sum

Can we find some sequence  such that

such that  ? It seems difficult just to spot such a sequence, so instead let us try to be systematic about it. It's not quite clear how to pick

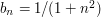

? It seems difficult just to spot such a sequence, so instead let us try to be systematic about it. It's not quite clear how to pick  , so let us set it to be

, so let us set it to be  . Then

. Then  , so

, so  . Next,

. Next,  , so

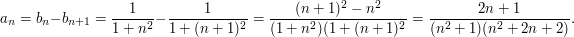

, so  . In general, we find that

. In general, we find that  . Since we also want

. Since we also want  to tend to zero, this tells us what

to tend to zero, this tells us what  must be:

must be:  , precisely the sum we were trying to calculate!

, precisely the sum we were trying to calculate!
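The circularity can be seen numerically. Below is a rough sketch, assuming the contrasting series is $\sum 1/n^2$ and writing $c$ for the undetermined first term $b_1$; the function name `b` is hypothetical. The value $\pi^2/6$ is the classical value of this sum and is not derived in the text above; it is used here only as a comparison point.

```python
import math


def b(n, c):
    """b_n forced by b_1 = c and b_k - b_{k+1} = 1/k^2:
    b_n = c - sum_{k=1}^{n-1} 1/k^2 (floating point)."""
    return c - sum(1.0 / (k * k) for k in range(1, n))


if __name__ == "__main__":
    # Classical value of sum 1/n^2, brought in from outside for comparison only.
    basel = math.pi ** 2 / 6
    for c in (1.5, basel, 2.0):
        # b_n approaches c - basel, so it tends to zero only when c is the sum itself.
        print(c, b(10, c), b(1000, c), b(100000, c))
```

Every choice of $c$ gives a valid antidifference, but picking the one that tends to zero requires already knowing the value of the sum, which is exactly the obstacle described above.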

The reason the technique worked in the example above was that the partial sums turned out to have a nice formula.

. This tends to

. This tends to  as

as  tends to infinity. Now let

tends to infinity. Now let

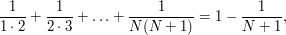

. A smart student will use partial fractions to discover that this is a telescoping sum and will end up with the answer

. A smart student will use partial fractions to discover that this is a telescoping sum and will end up with the answer  .

.If this method works, it is a bit like managing to calculate an integral by antidifferentiating the integrand.

Tricki
