Quick description

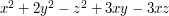

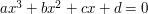

When faced with an expression involving both purely quadratic terms (e.g. $ax^2$) and linear terms (e.g. $bx$) in one or more variables $x$, translate the variable $x$ in order to absorb the linear terms into the quadratic ones. This can create additional constant terms, but, all other things being equal, constant terms are easier to deal with than linear terms.

Prerequisites

High school algebra

Example 1: The quadratic formula

This is the classic example of the completing the square trick. Suppose one wants to find all solutions to the quadratic equation

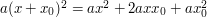

where  are parameters. The linear term prevents one from solving this equation directly; however, observe that

are parameters. The linear term prevents one from solving this equation directly; however, observe that  for any shift

for any shift  . Thus, (assuming

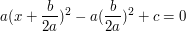

. Thus, (assuming  ) if one picks

) if one picks  , one can absorb the linear factor into the quadratic one, obtaining an equation

, one can absorb the linear factor into the quadratic one, obtaining an equation

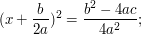

which only involves quadratic and constant terms. Now, the rules of high school algebra can solve this equation. Dividing by  and rearranging, one gets

and rearranging, one gets

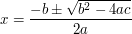

taking square roots, we arrive at the famous formula

for the two solutions to the quadratic equation, bearing in mind that the square root may well be imaginary if  is negative. (For the

is negative. (For the  , the above formula does not make sense, but in that case the equation degenerates to the linear equation

, the above formula does not make sense, but in that case the equation degenerates to the linear equation  , which has one solution

, which has one solution  , unless

, unless  managed to degenerate to zero also, in which case there are no solutions when

managed to degenerate to zero also, in which case there are no solutions when  and an infinite number of solutions when

and an infinite number of solutions when  .)

.)

See "how to solve quadratic equations" for more discussion.
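The steps above translate directly into a few lines of Python. This is a minimal sketch under our own assumptions, not code from the article, and the name `solve_quadratic` is hypothetical. It shifts by $x_0 = b/(2a)$, rearranges, and takes a complex square root, so a negative discriminant yields the imaginary roots mentioned above.

```python
import cmath


def solve_quadratic(a: float, b: float, c: float) -> tuple[complex, complex]:
    """Solve a*x**2 + b*x + c = 0 (with a != 0) by completing the square."""
    if a == 0:
        # Degenerate case: the equation is linear, handled separately in the text.
        raise ValueError("a must be nonzero")
    # Absorb the linear term: a*(x + x0)**2 + (c - b**2/(4a)) = 0 with x0 = b/(2a).
    x0 = b / (2 * a)
    # Divide by a and rearrange: (x + x0)**2 = (b**2 - 4ac) / (4a**2).
    rhs = (b * b - 4 * a * c) / (4 * a * a)
    # Take square roots; cmath.sqrt returns an imaginary root when rhs < 0.
    r = cmath.sqrt(rhs)
    return (-x0 + r, -x0 - r)
```

For example, `solve_quadratic(1, -3, 2)` gives roots $2$ and $1$ (as complex numbers), and `solve_quadratic(1, 0, 1)` gives $\pm i$.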

Example 2

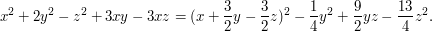

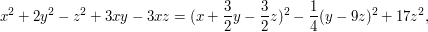

A quadratic form is an expression like  . That particular example is a quadratic form on

. That particular example is a quadratic form on  , and in general a quadratic form over

, and in general a quadratic form over  is a function

is a function  obtained by taking a symmetric bilinear form

obtained by taking a symmetric bilinear form  on

on  and defining

and defining  to be

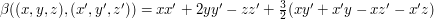

to be  . In the example above, the bilinear form is

. In the example above, the bilinear form is  .

.

It is often convenient to diagonalize a quadratic form, which means writing it as a linear combination of squares of linearly independent linear forms. Let us do this for the example above, by completing the square.

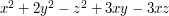

Our aim will be to achieve this by removing from  the square of a linear form in such a way that

the square of a linear form in such a way that  is no longer involved. To do this, we first pick out the terms that involve

is no longer involved. To do this, we first pick out the terms that involve  , which are

, which are  . We then try to find a linear form

. We then try to find a linear form  such that when you square it the terms involving

such that when you square it the terms involving  are precisely these ones. With a bit of experience in completing the square, we know that

are precisely these ones. With a bit of experience in completing the square, we know that  will do the job. Indeed,

will do the job. Indeed,

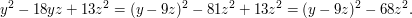

Therefore,

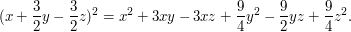

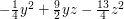

We would now like to finish this process by diagonalizing  , which we can make look slightly nicer by writing it as

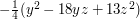

, which we can make look slightly nicer by writing it as  . Completing the square again, we find that

. Completing the square again, we find that

Plugging this in, we deduce that

and the quadratic form has been diagonalized.
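The procedure above can be sketched in Python: repeatedly pick a variable, complete the square to eliminate it, and recurse on what is left. This is a minimal illustration under our own conventions, not code from the article. The form is given by a hypothetical symmetric coefficient matrix `M` with $q(v)=\sum_{i,j} M_{ij}v_iv_j$, and `diagonalize` returns pairs $(c_k, \ell_k)$ with $q = \sum_k c_k\,\ell_k(v)^2$. When no square term is left but a cross term $2a\,v_iv_j$ survives, it falls back on the standard identity $4\ell_i\ell_j = (\ell_i+\ell_j)^2 - (\ell_i-\ell_j)^2$, which the example above does not need.

```python
from fractions import Fraction


def diagonalize(M):
    """Write q(v) = sum_{i,j} M[i][j] v_i v_j as sum_k c_k * (l_k . v)**2.

    M is a symmetric matrix given as a list of lists; entries are converted
    to Fraction so that all arithmetic is exact. Returns a list of pairs
    (c_k, l_k), where l_k is the coefficient list of a linear form.
    """
    n = len(M)
    M = [[Fraction(x) for x in row] for row in M]
    terms = []
    while True:
        # Completing the square: pivot on a variable v_k whose square
        # still appears with a nonzero coefficient M[k][k].
        k = next((i for i in range(n) if M[i][i] != 0), None)
        if k is not None:
            form = M[k][:]  # l(v) = sum_j M[k][j] v_j
            c = 1 / M[k][k]
            terms.append((c, form))
            # q - c * l(v)**2 no longer involves v_k (its row becomes zero).
            M = [[M[i][j] - c * form[i] * form[j] for j in range(n)]
                 for i in range(n)]
            continue
        # No squares left: look for a surviving cross term 2*a*v_i*v_j.
        pair = next(((i, j) for i in range(n) for j in range(i + 1, n)
                     if M[i][j] != 0), None)
        if pair is None:
            return terms  # what is left of q is identically zero
        i, j = pair
        a = M[i][j]
        li, lj = M[i][:], M[j][:]
        # (2/a) * li * lj removes v_i and v_j, and equals
        # (1/(2a)) * ((li + lj)**2 - (li - lj)**2).
        terms.append((1 / (2 * a), [x + y for x, y in zip(li, lj)]))
        terms.append((-1 / (2 * a), [x - y for x, y in zip(li, lj)]))
        M = [[M[r][s] - (li[r] * lj[s] + lj[r] * li[s]) / a for s in range(n)]
             for r in range(n)]
```

For instance, `diagonalize([[1, 2], [2, 1]])` encodes $x^2 + 4xy + y^2$ and returns the pairs for $(x+2y)^2 - \tfrac13(-3y)^2 = (x+2y)^2 - 3y^2$. No eigenvalues are computed at any point.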

Example 3

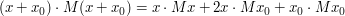

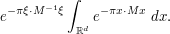

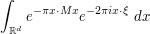

Completing the square can be used to compute the Fourier transform of gaussians. In one dimension, this was done in "Use rescaling or translation to normalize parameters"; we do the multi-dimensional case here. Specifically, let us compute the Fourier transform

of the Gaussian  , where

, where  is a positive definite real symmetric

is a positive definite real symmetric  matrix, and

matrix, and  . Observe that

. Observe that

for all  . Thus, if we pick

. Thus, if we pick  , we can complete the square and rewrite (1) as

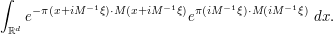

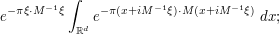

, we can complete the square and rewrite (1) as

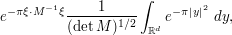

The constant term  , being independent of

, being independent of  , can be pulled out of the integral and simplified, leaving us with

, can be pulled out of the integral and simplified, leaving us with

contour shifting in each of the  variables of integration separately then allows us to rewrite this as♦

variables of integration separately then allows us to rewrite this as♦

Making the change of variables  to normalize the integrand, we can simplify this as

to normalize the integrand, we can simplify this as

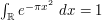

which by factoring the integral and using the standard integral  (proven at square and rearrange) simplifies to

(proven at square and rearrange) simplifies to

An alternative approach is to transform the variables first to place the integral in a normal form; it is instructive to see how both computations end up at the same answer at the end of the day.
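As a numerical sanity check, which is not part of the original argument, one can compare the closed form $\hat f(\xi) = \det(A)^{-1/2}e^{-\pi\langle A^{-1}\xi,\xi\rangle}$ against a direct Riemann-sum evaluation of the integral defining $\hat f(\xi)$. The sketch below makes several assumptions: $n = 2$, a hypothetical sample matrix, and a truncation window $[-L, L]^2$ large enough that the Gaussian is negligible outside it. The function names are our own.

```python
import cmath
import math


def gaussian_ft_numeric(A, xi, L=6.0, N=241):
    """Riemann-sum approximation of hat f(xi) in two dimensions.

    Sums exp(-pi <Ax,x>) exp(-2 pi i <x,xi>) over an N-by-N grid on
    [-L, L]^2; A is assumed symmetric positive definite.
    """
    h = 2 * L / (N - 1)
    total = 0j
    for p in range(N):
        x1 = -L + p * h
        for q in range(N):
            x2 = -L + q * h
            quad = A[0][0] * x1 * x1 + 2 * A[0][1] * x1 * x2 + A[1][1] * x2 * x2
            phase = x1 * xi[0] + x2 * xi[1]
            total += cmath.exp(-math.pi * quad - 2j * math.pi * phase)
    return total * h * h


def gaussian_ft_closed_form(A, xi):
    """det(A)^(-1/2) exp(-pi <A^{-1} xi, xi>) for a 2x2 symmetric A."""
    det = A[0][0] * A[1][1] - A[0][1] ** 2
    # <A^{-1} xi, xi>, using the explicit inverse of a 2x2 matrix.
    inv_quad = (A[1][1] * xi[0] ** 2 - 2 * A[0][1] * xi[0] * xi[1]
                + A[0][0] * xi[1] ** 2) / det
    return det ** -0.5 * math.exp(-math.pi * inv_quad)
```

With, say, `A = [[1.0, 0.3], [0.3, 0.5]]`, the two functions agree to many decimal places for moderate $\xi$. Because the integrand is smooth and decays rapidly, the plain grid sum is very accurate.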

Example 4

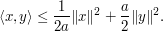

The standard proof of the Cauchy-Schwarz inequality $|\langle v, w\rangle| \le \|v\|\|w\|$ in a Hilbert space (which we take here to be real, for simplicity) can be viewed as a variant of the completing-the-square trick, but now one converts a linear term into quadratic and constant terms rather than vice versa. Indeed, since

$$\|v - \lambda w\|^2 = \|v\|^2 - 2\lambda\langle v, w\rangle + \lambda^2\|w\|^2$$

for any $\lambda \in \mathbb{R}$, we can write

$$\langle v, w\rangle = \frac{1}{2\lambda}\|v\|^2 + \frac{\lambda}{2}\|w\|^2 - \frac{1}{2\lambda}\|v - \lambda w\|^2$$

for any $\lambda > 0$; in particular,

$$\langle v, w\rangle \le \frac{1}{2\lambda}\|v\|^2 + \frac{\lambda}{2}\|w\|^2.$$

If we then optimize in $\lambda$ (taking $\lambda := \|v\|/\|w\|$ when $v, w \neq 0$; the case where $v$ or $w$ vanishes is trivial), we obtain $\langle v, w\rangle \le \|v\|\|w\|$, and replacing $v$ by $-v$ gives the Cauchy-Schwarz inequality.
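The optimization step can be checked with a small numerical sketch. It assumes the real Hilbert space is $\mathbb{R}^n$ with the standard dot product, and the helper names are hypothetical. The bound $\frac{1}{2\lambda}\|v\|^2 + \frac{\lambda}{2}\|w\|^2$ has derivative $-\frac{\|v\|^2}{2\lambda^2} + \frac{\|w\|^2}{2}$ in $\lambda$. That derivative vanishes at $\lambda = \|v\|/\|w\|$, where the bound equals $\|v\|\|w\|$.

```python
import math


def dot(v, w):
    """Standard inner product on R^n."""
    return sum(a * b for a, b in zip(v, w))


def cs_bound(v, w, lam):
    """(1/(2*lam)) |v|^2 + (lam/2) |w|^2, an upper bound for <v, w> when lam > 0."""
    return dot(v, v) / (2 * lam) + lam * dot(w, w) / 2


def optimal_lambda(v, w):
    """Minimizer lam = |v| / |w| of cs_bound (assumes v and w are nonzero)."""
    return math.sqrt(dot(v, v)) / math.sqrt(dot(w, w))
```

Every choice of `lam > 0` gives a valid bound. Only the optimal one is sharp enough to produce Cauchy-Schwarz.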

Example 5

Suggestions welcome!

General discussion

Completing the square can also be done in several variables, whenever one is adding a quadratic form $\langle Ax, x\rangle$ to a linear form $\langle b, x\rangle$ plus some constant terms, provided that the quadratic form is non-degenerate (this is analogous to the $a \neq 0$ condition in the quadratic formula).
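In matrix notation, the multivariable identity can be sketched as follows. The notation is ours: $A$ is a real symmetric invertible $n \times n$ matrix (invertibility being the non-degeneracy condition), $b \in \mathbb{R}^n$, $c \in \mathbb{R}$, and $\langle\cdot,\cdot\rangle$ is the standard inner product.

$$\langle Ax, x\rangle + \langle b, x\rangle + c = \langle A(x - x_0), x - x_0\rangle + c - \tfrac{1}{4}\langle A^{-1}b, b\rangle, \qquad x_0 := -\tfrac{1}{2}A^{-1}b.$$

To verify it, expand the right-hand side. By the symmetry of $A$, $-2\langle Ax_0, x\rangle = \langle b, x\rangle$ and $\langle Ax_0, x_0\rangle = \tfrac14\langle A^{-1}b, b\rangle$. For $n = 1$, this reduces to the one-variable identity behind the quadratic formula.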

The method also works to some extent for higher-degree polynomials, but is significantly weaker. For instance, with cubic equations $ax^3 + bx^2 + cx + d = 0$, one can complete the cube to eliminate the quadratic term $bx^2$ (at the expense of modifying the lower-order terms $cx + d$), but the linear term remains, and further tricks are needed to solve this equation. See "How to solve cubic and quartic equations".
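The "complete the cube" step can be sketched as follows. The helper `depress_cubic` is hypothetical, and this is a sketch rather than the article's method. Substituting $x = t - \frac{b}{3a}$ into $ax^3+bx^2+cx+d$ gives $a(t^3 + pt + q)$ with the standard depressed-cubic coefficients $p = \frac{3ac-b^2}{3a^2}$ and $q = \frac{2b^3-9abc+27a^2d}{27a^3}$. The linear term $pt$ generally survives, as noted above.

```python
def depress_cubic(a, b, c, d):
    """Complete the cube in a*x**3 + b*x**2 + c*x + d (requires a != 0).

    Returns (p, q, shift) such that, with x = t + shift,
    a*x**3 + b*x**2 + c*x + d == a*(t**3 + p*t + q).
    """
    if a == 0:
        raise ValueError("leading coefficient must be nonzero")
    # The shift x = t - b/(3a) is chosen to cancel the t**2 term.
    shift = -b / (3 * a)
    # The linear and constant terms are modified but not removed.
    p = (3 * a * c - b * b) / (3 * a * a)
    q = (2 * b ** 3 - 9 * a * b * c + 27 * a * a * d) / (27 * a ** 3)
    return p, q, shift
```

For example, $x^3 + 3x^2 + 3x + 1 = (x+1)^3$ gives $p = q = 0$ with shift $-1$. A generic cubic keeps a nonzero $p$, which is where the further tricks come in.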

Tricki


Comments

Possible further examples

Wed, 29/04/2009 - 18:57, by gowers

I have a couple of suggestions. One is to do a Gaussian computation in this article. A natural example is to take a bivariate normal random variable $(X,Y)$ and create out of $X$ and $Y$ two linear combinations that are linearly independent. That should perhaps come after my second suggestion, which is simply to explain how to diagonalize a quadratic form (by giving a few examples). If I have a spare moment, I may add these examples.

The second one is one I care about because a lot of people I teach seem to have the impression that the only way of diagonalizing a quadratic form is to diagonalize the associated symmetric matrix.

Incidentally, I'm all in favour of using the same example in more than one article, so there might be a case for having the Fourier transform of a Gaussian done here as well.

completing the square to prove inequalities

Wed, 29/04/2009 - 21:55, by Anonymous (not verified)

I've seen this used multiple times, in a context similar to the usual proof of the Cauchy-Schwarz inequality, where completing the square of a real-valued expression shows that some grouping of terms is non-negative.

Thanks for the suggestions!

Wed, 29/04/2009 - 23:24, by tao

I put up some quick examples to reflect them. Feel free to edit, of course.

Inline comments

The following comments were made inline in the article.

Contour shifting

Thu, 30/04/2009 - 10:22, by gowers

I've started an article about making complex substitutions in integrals. My guess is that this is what you mean by contour shifting, but I have not put this link in yet because I am not quite sure. If it is, and if this is the standard name for the technique, then I'll add a remark to that effect to the article.

Contour shifting

Thu, 30/04/2009 - 18:28, by tao

Hmm, I thought this was a common term, but I just googled for it and the first mathematical occurrence of it was... one of my own web pages. But "shifting the contour" is a reasonably common term. It's not _quite_ the same as complex substitution (it's based on Cauchy's theorem for contour integrals, rather than the change of variables formula), but it of course combines very well with such substitutions. But it can also be used independently of complex substitution, e.g. to evaluate integrals by shifting the contour to a big semicircle etc.

I link to this nonexistent article in a couple other places, so I think I'll start a stub on it, and perhaps return to it later.

This article is

Fri, 21/08/2009 - 02:02, by boumbi

This article is unintelligible.

How can it be possible to transform the method of completing the square into such gibberish ("charabia")?

By the way, a(x+x_0)^2 = ax^2 + 2ax x_0 + x_0^2 is false.

I don't find the article

Sun, 23/08/2009 - 23:14, by olof

I don't find the article unintelligible at all; perhaps you could clarify what you find problematic.

Also, editing a page to correct typos (like the missing coefficient you spotted) is very simple: just click on edit at the top of the article. I've corrected it here.
